Overview

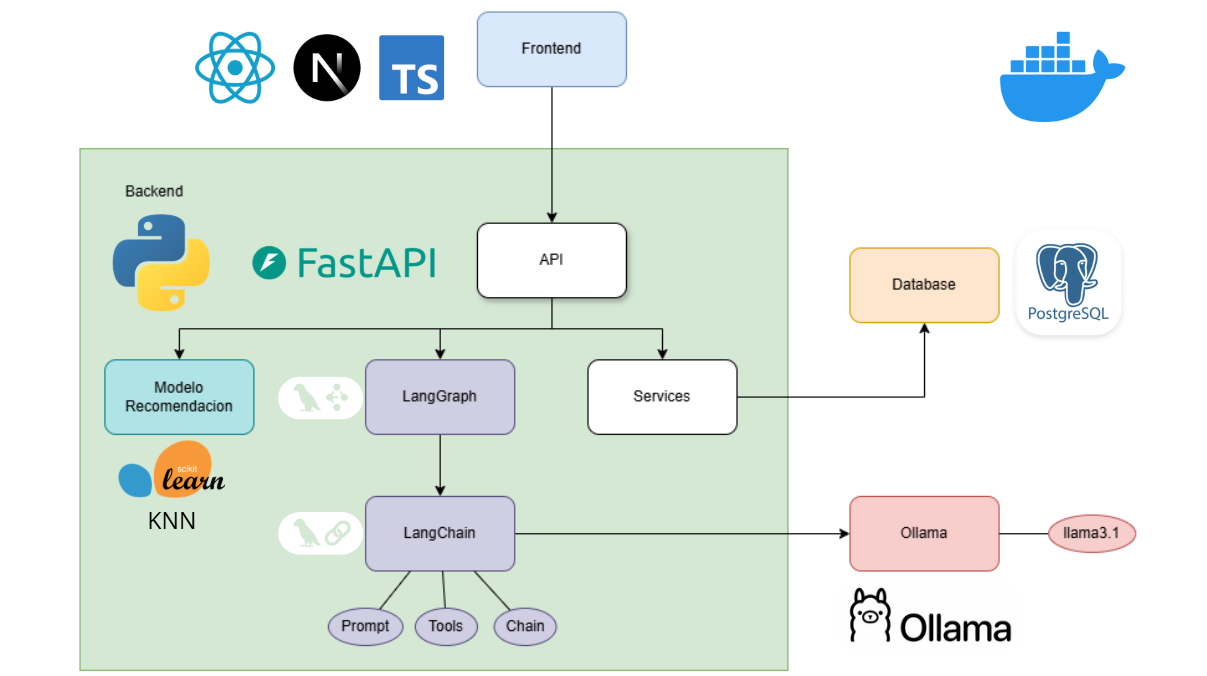

SmartEat AI is a full-stack nutrition planning platform that combines artificial intelligence, machine learning, and modern web technologies to deliver personalized meal recommendations and nutritional guidance. The system follows a microservices architecture with clear separation between frontend, backend, database, and AI services.

Architecture Diagram

The system architecture consists of four main layers working together to deliver a seamless user experience:

System Components

Frontend Layer

Next.js application providing a modern, responsive user interface for user interaction and data visualization

Backend Layer

FastAPI REST API handling business logic, authentication, and data processing

Database Layer

PostgreSQL relational database storing user profiles, recipes, plans, and nutritional data

AI/ML Layer

LangChain agents, Ollama LLMs, and scikit-learn models powering intelligent recommendations

Component Details

Frontend Application

The frontend is built with Next.js and TypeScript, providing a modern single-page application experience:

- App Router Architecture: Utilizes Next.js 16’s App Router for efficient routing and layouts

- Protected Routes: Authentication-based route protection for secure access

- Context Providers: React Context API for global state management (auth, profile)

- Responsive Design: Tailwind CSS for mobile-first, accessible UI components

- Real-time Chat: WebSocket-ready chat interface for AI agent interaction

- Dashboard: Daily meal tracking and nutritional metrics

- Profile: User biometrics, goals, and dietary preferences

- My Plan: Weekly meal plan visualization and management

- Chat: Conversational interface with Smarty AI agent

Backend API

The backend is a FastAPI application following clean architecture principles:

- RESTful API: Well-structured endpoints following REST conventions

- JWT Authentication: Secure token-based authentication with bcrypt password hashing

- Database ORM: SQLAlchemy for type-safe database operations

- Alembic Migrations: Version-controlled database schema management

- Modular Services: Separated business logic, CRUD operations, and API routes
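To make the separation between API routes, business logic, and CRUD operations concrete, here is a minimal sketch in plain Python. Every name in it (`MealAssignment`, `assign_meal`, `post_meal_route`, the meal-type set) is hypothetical, and an in-memory list stands in for PostgreSQL; in the actual FastAPI app the route would be a decorated path operation and request validation would come from Pydantic schemas.

```python
from dataclasses import asdict, dataclass
from typing import Dict, List, Tuple


@dataclass
class MealAssignment:
    plan_id: int
    day: int        # 0-6, assumed to index the days of the plan's week
    meal_type: str
    recipe_id: int


# In-memory list standing in for PostgreSQL accessed through SQLAlchemy (assumption).
_ASSIGNMENTS: List[MealAssignment] = []


# CRUD layer: persistence only, no business rules.
def crud_create_assignment(item: MealAssignment) -> MealAssignment:
    _ASSIGNMENTS.append(item)
    return item


def crud_list_assignments(plan_id: int) -> List[MealAssignment]:
    return [a for a in _ASSIGNMENTS if a.plan_id == plan_id]


# Service layer: business rules, independent of HTTP.
MEAL_TYPES = {"breakfast", "lunch", "dinner", "snack"}  # hypothetical set of meal types


def assign_meal(plan_id: int, day: int, meal_type: str, recipe_id: int) -> MealAssignment:
    if not 0 <= day <= 6:
        raise ValueError("day must be between 0 and 6")
    if meal_type not in MEAL_TYPES:
        raise ValueError(f"unknown meal type: {meal_type}")
    return crud_create_assignment(MealAssignment(plan_id, day, meal_type, recipe_id))


# Route layer: translate a request body into a service call and an HTTP-style response.
def post_meal_route(plan_id: int, body: Dict) -> Tuple[int, Dict]:
    try:
        item = assign_meal(plan_id, int(body["day"]), body["meal_type"], int(body["recipe_id"]))
    except (KeyError, TypeError, ValueError) as exc:
        return 422, {"detail": str(exc)}  # 422 mirrors FastAPI's validation-error status
    return 201, asdict(item)
```

Because the service layer never touches HTTP details, it can be unit-tested directly, and the route layer stays a thin translation step.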

Database Schema

PostgreSQL serves as the primary data store with a normalized relational schema:

- Users & Authentication: User credentials, profiles, and sessions

- Recipes: Nutritional information, ingredients, instructions, and dietary classifications

- Plans & Menus: Weekly meal plans, daily menus, and meal assignments

- Preferences: User dietary restrictions, tastes, and goals

AI & Machine Learning Services

The AI layer combines multiple technologies for intelligent nutrition guidance:

- Conversational AI

- Recommendation Engine

- Embeddings & Search

- Data Pipeline

LangChain + LangGraph Agent

Smarty, the conversational agent, uses LangGraph to orchestrate complex workflows:

- Multi-step reasoning for meal plan generation

- Context-aware conversation handling

- Dynamic plan modifications based on user requests

- RAG (Retrieval-Augmented Generation) for recipe knowledge
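The agent's control flow can be pictured as a small graph of nodes that read and update a shared state, which is the model LangGraph is built around. The sketch below imitates that idea in plain Python rather than using the LangGraph API: a routing node decides whether a message needs recipe retrieval, a retrieval node ranks recipes by cosine similarity (the RAG step), and a generation node stands in for the LLM call. The toy embeddings, the keyword-based `embed` function, and the node names are all assumptions for illustration.

```python
import math
from typing import Callable, Dict, List

END = "__end__"
TOP_K = 2

# Toy recipe embeddings (hypothetical); a real system would store vectors from an embedding model.
RECIPE_VECTORS: Dict[str, List[float]] = {
    "lentil soup": [0.9, 0.1, 0.0],
    "grilled chicken salad": [0.2, 0.9, 0.1],
    "oatmeal with berries": [0.1, 0.2, 0.9],
}


def embed(text: str) -> List[float]:
    """Hypothetical keyword 'embedding' standing in for a real embedding model."""
    t = text.lower()
    return [float("soup" in t or "vegan" in t), float("protein" in t), float("breakfast" in t)]


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def classify(state: dict) -> dict:
    """Routing node: recipe-related messages go through retrieval first."""
    wants_recipes = any(w in state["message"].lower() for w in ("recipe", "meal", "swap"))
    return {**state, "next": "retrieve" if wants_recipes else "generate"}


def retrieve(state: dict) -> dict:
    """RAG retrieval node: rank recipes by cosine similarity to the message."""
    query = embed(state["message"])
    ranked = sorted(RECIPE_VECTORS, key=lambda n: cosine(query, RECIPE_VECTORS[n]), reverse=True)
    return {**state, "context": ranked[:TOP_K], "next": "generate"}


def generate(state: dict) -> dict:
    """Generation node: stub for the LLM call (the real agent would prompt the model)."""
    context = ", ".join(state.get("context", [])) or "no retrieved recipes"
    return {**state, "answer": f"[LLM answer grounded in: {context}]", "next": END}


NODES: Dict[str, Callable[[dict], dict]] = {
    "classify": classify,
    "retrieve": retrieve,
    "generate": generate,
}


def run_graph(message: str, entry: str = "classify", max_steps: int = 10) -> dict:
    """Follow each node's 'next' pointer until a node routes to END."""
    state = {"message": message, "next": entry}
    for _ in range(max_steps):
        state = NODES[state["next"]](state)
        if state["next"] == END:
            return state
    raise RuntimeError("graph did not reach END")
```

For example, `run_graph("Suggest a high-protein meal")` passes through retrieval and puts "grilled chicken salad" first in `context`, while `run_graph("hello")` skips straight to generation.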

Communication Flow

User Request Flow

Data Persistence

All user data, recipes, and generated plans are persisted in PostgreSQL:

- User profiles store biometric data and dietary preferences

- Plans link users to weekly meal assignments

- Meal details connect plans to specific recipes for each meal type
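A minimal sketch of how these links might look as normalized tables, using Python's built-in `sqlite3` as a stand-in for PostgreSQL. The table and column names (`users`, `recipes`, `plans`, `meal_details`, `day`, `meal_type`) and the sample rows are hypothetical, not the project's actual Alembic schema.

```python
import sqlite3
from typing import List, Tuple

# Hypothetical schema: plans belong to users, meal_details join plans to recipes.
SCHEMA = """
CREATE TABLE users (
    id INTEGER PRIMARY KEY,
    email TEXT UNIQUE NOT NULL,
    weight_kg REAL,
    height_cm REAL
);
CREATE TABLE recipes (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    calories INTEGER
);
CREATE TABLE plans (
    id INTEGER PRIMARY KEY,
    user_id INTEGER NOT NULL REFERENCES users(id),
    week_start TEXT NOT NULL
);
CREATE TABLE meal_details (
    id INTEGER PRIMARY KEY,
    plan_id INTEGER NOT NULL REFERENCES plans(id),
    recipe_id INTEGER NOT NULL REFERENCES recipes(id),
    day INTEGER NOT NULL,
    meal_type TEXT NOT NULL
);
"""


def build_demo_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces FKs only when enabled
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO users (id, email, weight_kg, height_cm) VALUES (1, 'ana@example.com', 62.0, 168.0)")
    conn.executemany("INSERT INTO recipes (id, name, calories) VALUES (?, ?, ?)",
                     [(1, "Oatmeal", 350), (2, "Lentil soup", 480)])
    conn.execute("INSERT INTO plans (id, user_id, week_start) VALUES (1, 1, '2024-01-01')")
    conn.executemany("INSERT INTO meal_details (plan_id, recipe_id, day, meal_type) VALUES (?, ?, ?, ?)",
                     [(1, 1, 0, "breakfast"), (1, 2, 0, "lunch")])
    return conn


def meals_for_user_day(conn: sqlite3.Connection, user_id: int, day: int) -> List[Tuple]:
    """Join plans -> meal_details -> recipes to list one day's meals for a user."""
    return conn.execute(
        """
        SELECT md.meal_type, r.name, r.calories
        FROM plans AS p
        JOIN meal_details AS md ON md.plan_id = p.id
        JOIN recipes AS r ON r.id = md.recipe_id
        WHERE p.user_id = ? AND md.day = ?
        ORDER BY md.id
        """,
        (user_id, day),
    ).fetchall()
```

Fetching a day's menu is then a join from plans through meal details to recipes, and the foreign keys ensure every meal detail points at an existing plan and recipe.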

AI Integration

The backend integrates AI services through well-defined interfaces:

- Ollama Service: Local LLM inference via HTTP API (port 11434)

- ML Model Service: In-memory KNN model for fast recommendations

- LangChain Agents: Stateful conversation management with tool calling
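Ollama exposes a JSON HTTP API on port 11434; a non-streaming completion is a `POST` to `/api/generate`, and the reply carries the generated text in a `response` field. The sketch below builds and sends such a request with the standard library only. The `llama3.1` model tag and the `localhost` base URL are assumptions (inside Docker Compose the backend would typically address the Ollama service by its service name), and the project may call Ollama through LangChain's integrations rather than raw HTTP.

```python
import json
import urllib.request

# Assumed base URL; inside Docker Compose this would usually be the Ollama service's hostname.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt: str, model: str = "llama3.1",
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST /api/generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, timeout: float = 120.0, **kwargs) -> str:
    """Send the request and return the generated text (needs a running Ollama server)."""
    req = build_generate_request(prompt, **kwargs)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Suggest a vegetarian lunch")` only works with Ollama running and the model pulled; the request builder can be inspected without either.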

Deployment Architecture

Docker Containerization

The application is fully containerized using Docker Compose:

Backend Container

smarteatai_backend

- Python 3.10+ with FastAPI

- Port 8000 exposed

- Volume-mounted for development

Frontend Container

smarteatai_frontend

- Node 20+ with Next.js

- Port 3000 exposed

- Hot-reload enabled

Database Container

smarteatai_db

- PostgreSQL 15

- Port 5432 exposed

- Persistent volume for data

Ollama Container

smarteatai_ollama

- Ollama LLM runtime

- Port 11434 exposed

- GPU acceleration support

Service Dependencies

Services are orchestrated with proper dependency management:

- Frontend depends on Backend API availability

- Backend depends on Database and Ollama services

- Adminer (port 8080) provides a database management UI
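Docker Compose starts the services listed under a service's `depends_on` before the service itself, which amounts to a topological ordering of the dependency graph. The sketch below reproduces that ordering with the standard library's `graphlib`; the service keys (`frontend`, `backend`, `db`, `ollama`) are hypothetical stand-ins for the containers described above. Plain `depends_on` only waits for containers to start, not to become ready; waiting for readiness requires a health check combined with `condition: service_healthy`.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical Compose service keys, each mapped to the services it depends on (per the list above).
DEPENDS_ON: Dict[str, Set[str]] = {
    "frontend": {"backend"},
    "backend": {"db", "ollama"},
}


def startup_order(deps: Dict[str, Set[str]]) -> List[str]:
    """Order services so every dependency starts before its dependents.

    TopologicalSorter raises graphlib.CycleError if the dependencies form a cycle.
    """
    return list(TopologicalSorter(deps).static_order())
```

The relative order of `db` and `ollama` is unspecified because neither depends on the other; only their position before `backend` is guaranteed.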

Scalability Considerations

The architecture supports horizontal scaling:

- Stateless API: Backend can run multiple instances behind a load balancer

- Database Connection Pooling: SQLAlchemy's connection pool reuses database connections across requests

- Model Caching: ML models loaded once in memory per instance

- Async Processing: FastAPI’s async support for concurrent requests
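A minimal sketch of the "load once per instance" pattern for the in-memory KNN recommender. Brute-force Euclidean search in plain Python stands in for the scikit-learn model, `functools.lru_cache` makes the loader run once per process, and the recipe feature vectors are invented for illustration. A real setup would scale features consistently before measuring distances.

```python
import math
from functools import lru_cache
from typing import Dict, List, Sequence, Tuple

# Hypothetical per-recipe features: (kcal/1000, protein g/100, carbs g/100, fat g/100).
_RECIPE_FEATURES: Dict[str, Tuple[float, ...]] = {
    "Grilled chicken salad": (0.45, 0.40, 0.15, 0.18),
    "Lentil soup": (0.48, 0.25, 0.60, 0.08),
    "Oatmeal with berries": (0.35, 0.10, 0.60, 0.06),
    "Salmon with quinoa": (0.60, 0.38, 0.45, 0.22),
}


@lru_cache(maxsize=1)
def load_model() -> Dict[str, Tuple[float, ...]]:
    """Runs once per process; stand-in for loading a fitted scikit-learn model from disk."""
    return dict(_RECIPE_FEATURES)


def recommend(target: Sequence[float], k: int = 2) -> List[str]:
    """Brute-force k-nearest neighbours by Euclidean distance to the target profile."""
    model = load_model()  # cached after the first call
    return sorted(model, key=lambda name: math.dist(target, model[name]))[:k]
```

Each backend instance holds its own copy of the model, so memory use grows with the number of instances while per-request latency stays low.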

Security Architecture

Authentication & Authorization

- JWT Tokens: Stateless authentication with signed tokens

- Password Hashing: bcrypt with a per-password salt and an adjustable cost factor

- Protected Routes: Frontend guards and backend middleware

- Token Expiration: Configurable session timeouts

Data Protection

- SQL Injection Prevention: SQLAlchemy ORM with parameterized queries

- Input Validation: Pydantic schemas for request/response validation

- Environment Variables: Sensitive configs stored in `.env` files

- CORS Configuration: Controlled cross-origin access
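The difference parameterized queries make is easiest to see side by side. This sketch uses `sqlite3` as a stand-in for PostgreSQL; SQLAlchemy's ORM binds values the same way automatically, and raw SQL through `sqlalchemy.text()` should use named bind parameters such as `:email`. The table and data are hypothetical.

```python
import sqlite3
from typing import List, Tuple


def make_demo_conn() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT UNIQUE NOT NULL)")
    conn.executemany("INSERT INTO users (id, email) VALUES (?, ?)",
                     [(1, "ana@example.com"), (2, "ben@example.com")])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, email: str) -> List[Tuple]:
    # Vulnerable: user input is spliced into the SQL text, so it can change the query itself.
    return conn.execute(f"SELECT id, email FROM users WHERE email = '{email}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, email: str) -> List[Tuple]:
    # Parameterized: the value is bound as a parameter and is only ever treated as data.
    return conn.execute("SELECT id, email FROM users WHERE email = ?", (email,)).fetchall()
```

With the input `x' OR '1'='1`, the unsafe version returns every user, while the parameterized version looks for an email literally equal to that string and finds none.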

Performance Optimizations

Frontend

- Static Site Generation: Pre-rendered pages where applicable

- Code Splitting: Automatic route-based chunking

- Image Optimization: Next.js Image component with lazy loading

- Client-side Caching: Context API reduces unnecessary API calls

Backend

- Connection Pooling: Reusable database connections

- Async Endpoints: Non-blocking I/O for concurrent requests

- Model Preloading: ML models loaded at startup

- Query Optimization: Indexed database columns for fast lookups
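Async endpoints help because awaiting I/O frees the event loop to serve other requests. The sketch below simulates five requests whose handlers each await a 0.1 s I/O call (a hypothetical stand-in for a database or Ollama round trip); run concurrently with `asyncio.gather`, they finish in roughly the time of one call rather than five. One caveat: a blocking call inside an `async def` endpoint stalls the whole loop, which is why FastAPI runs plain `def` endpoints in a thread pool instead.

```python
import asyncio
import time
from typing import List, Tuple


async def fake_io_call(name: str, delay: float = 0.1) -> str:
    # Stand-in for an awaited database or LLM call; the loop serves other work while waiting.
    await asyncio.sleep(delay)
    return f"{name}: done"


async def serve_concurrently(n: int) -> List[str]:
    # Roughly what happens when n requests hit async endpoints at the same time.
    return await asyncio.gather(*(fake_io_call(f"request-{i}") for i in range(n)))


def run_and_time(n: int) -> Tuple[List[str], float]:
    start = time.perf_counter()
    results = asyncio.run(serve_concurrently(n))
    return results, time.perf_counter() - start
```

`run_and_time(5)` should report an elapsed time close to 0.1 s, versus about 0.5 s if the five calls ran one after another.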

AI Services

- Local Inference: Ollama runs models on local hardware, avoiding network round trips to external LLM APIs

- GPU Acceleration: NVIDIA support for faster LLM responses

- Context Window Management: Optimized prompt sizes for Llama 3.1

- Model Quantization: Quantized models keep memory use within 8 GB of VRAM
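Context window management usually means fitting the system prompt and as much recent conversation as possible into a fixed token budget. The sketch below keeps the system prompt and walks backwards through the history until the budget is spent. The four-characters-per-token estimate is a rough assumption; an exact count would need the model's own tokenizer, and the budget itself would follow from the context length configured for the model (for Ollama, the `num_ctx` option).

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token for English text.
    return max(1, len(text) // 4)


def trim_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep the system prompt (assumed to be first) plus the newest messages that fit."""
    system, history = messages[0], messages[1:]
    used = estimate_tokens(system["content"])
    kept: List[Message] = []
    for msg in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(msg["content"])
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    return [system] + kept[::-1]  # restore chronological order
```

Dropping the oldest turns first preserves the instructions and the most recent context, which usually matter most for the next reply.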

Monitoring & Observability

The system includes built-in health checks and logging for operational visibility.

- Health Endpoints: `/health` endpoints for service status

- API Documentation: Auto-generated Swagger UI at `/docs`

- Database Admin: Adminer interface for data inspection

- Application Logs: Structured logging in backend services
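A `/health` endpoint typically aggregates the status of the services the backend depends on. The sketch below checks each dependency with a plain TCP connection attempt and reports `ok` or `degraded`; the payload shape, the service names, and the choice of TCP checks (rather than, say, a `SELECT 1` against the database) are assumptions, not the project's actual implementation.

```python
import socket
from typing import Dict, Tuple


def tcp_check(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def health_report(services: Dict[str, Tuple[str, int]]) -> dict:
    """Build a /health-style payload (hypothetical shape) from per-service checks."""
    statuses = {name: ("up" if tcp_check(host, port) else "down")
                for name, (host, port) in services.items()}
    overall = "ok" if all(s == "up" for s in statuses.values()) else "degraded"
    return {"status": overall, "services": statuses}


# Example with hypothetical Compose service names:
# health_report({"db": ("db", 5432), "ollama": ("ollama", 11434)})
```

A TCP check only proves a port is open; a deeper check would run a trivial query or request against each service.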

Technology Stack Summary

The architecture leverages modern, production-ready technologies:

| Layer | Technologies |
|---|---|
| Frontend | Next.js 16, TypeScript, React 19, Tailwind CSS |
| Backend | FastAPI, Python 3.10+, SQLAlchemy, Pydantic |
| Database | PostgreSQL 15, Alembic migrations |
| AI/ML | LangChain, LangGraph, Ollama (Llama 3.1), scikit-learn |
| DevOps | Docker, Docker Compose |
| Authentication | JWT, bcrypt |

Next Steps

Dive deeper into the specific technologies used in each layer.