Introduction

Similarity-based RAG built on vector databases has shown significant limitations in recent AI applications, and reasoning-based (agentic) retrieval has become increasingly important. The classic RAG pipeline takes an embedding as input, returns the top-K chunks, and re-ranks them. What should an agentic-native retrieval API look like instead? An agentic-native retrieval system should let you prompt for retrieval as naturally as you interact with ChatGPT. The example below shows how the PageIndex Chat API enables this style of prompt-driven retrieval.

PageIndex Chat API

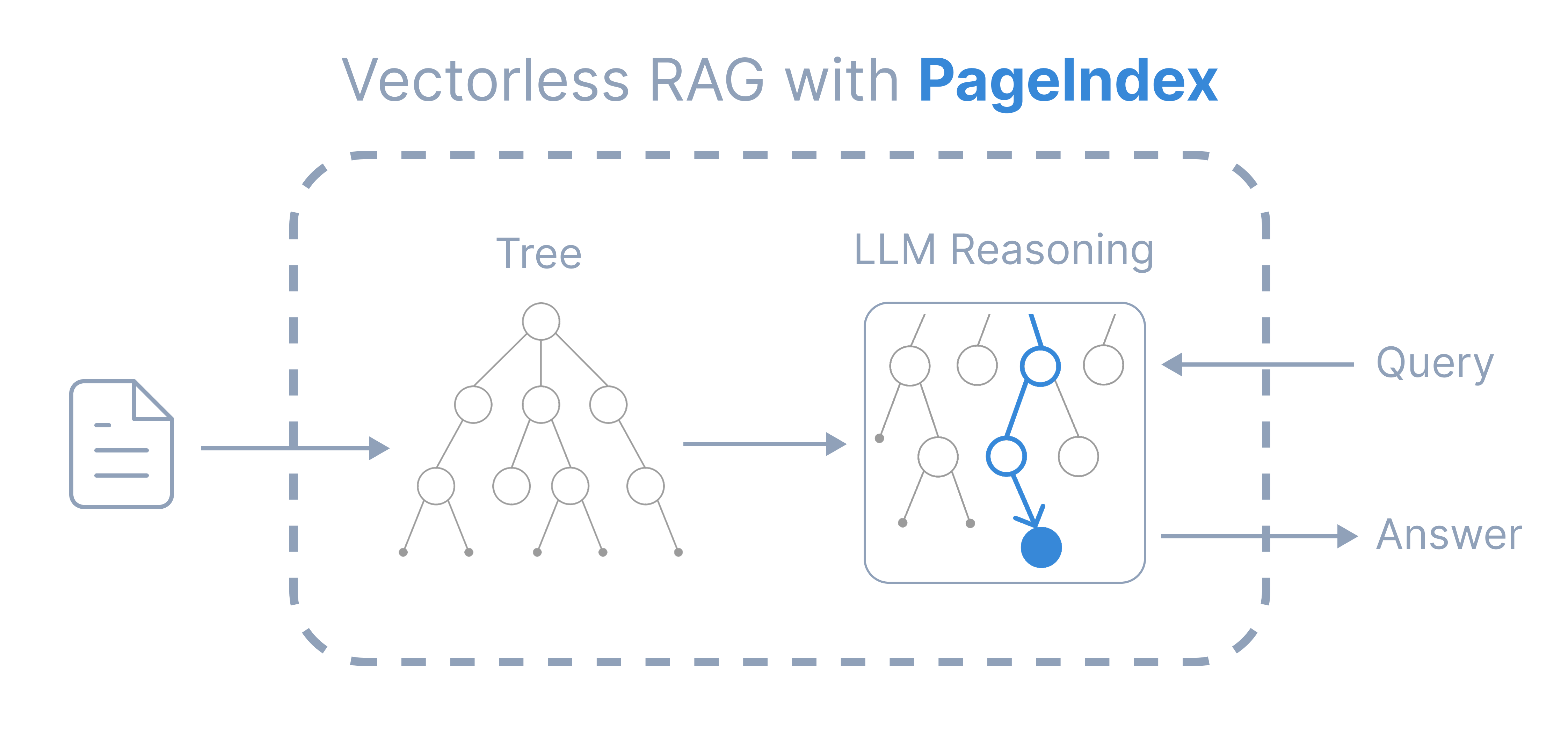

PageIndex Chat is an AI assistant that lets you chat with multiple very long documents without running into context limits or context rot. It is built on PageIndex, a vectorless, reasoning-based RAG framework designed to give more transparent and reliable results, much as a human expert would. You can now access PageIndex Chat through an API or SDK.

What You’ll Learn

This cookbook demonstrates a simple, minimal example of agentic retrieval with PageIndex. You will learn:

- How to use the PageIndex Chat API
- How to prompt PageIndex Chat to act as a retrieval system

Setup

Upload a Document

Download a document and submit it to PageIndex.

Check Processing Status

Verify that the document has finished processing.

Ask Questions About the Document

Use the PageIndex Chat API to ask questions about the document.

Agentic Retrieval

You can easily prompt the PageIndex Chat API to act as a retrieval assistant.

Key Benefits

The PageIndex Chat API provides several advantages for agentic retrieval:

Prompt-Driven

Natural language prompts for retrieval instead of vector similarity
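
To make the prompt-driven idea concrete, here is a minimal sketch of how a retrieval request might be phrased as a plain chat message. The helper name `build_retrieval_messages`, the `role`/`content` message shape, and the exact wording of the instructions are illustrative assumptions, not part of the PageIndex API. The point is only that retrieval is requested in natural language rather than through an embedding query.

```python
def build_retrieval_messages(query: str, max_results: int = 5) -> list[dict]:
    """Wrap a user query in instructions that ask for passages, not a final answer.

    Hypothetical helper: the message format and the prompt wording are assumptions.
    """
    instructions = (
        "You are a retrieval assistant. Do not answer the question directly. "
        f"Find up to {max_results} passages in the document that are most relevant "
        "to the query below. Return only a JSON list of objects with the keys "
        '"page" (page number) and "content" (verbatim passage text).\n\n'
        f"Query: {query}"
    )
    # A single user message carries both the retrieval instructions and the query.
    return [{"role": "user", "content": instructions}]


messages = build_retrieval_messages(
    "What evaluation methods are used in this paper?", max_results=3
)
print(messages[0]["content"])
```

The resulting message list would then be passed to whichever chat call your client exposes. Changing the retrieval behavior (number of passages, output fields, level of detail) means editing the prompt text, not the pipeline.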

Structured Output

Request specific output formats like JSON for downstream processing
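
When the prompt asks for JSON, the reply still arrives as text, so downstream code usually needs a small parsing step. The sketch below assumes the reply contains a JSON list of objects with `page` and `content` keys, possibly wrapped in a fenced block. The function name `parse_retrieval_reply` and the sample reply are hypothetical and are not taken from the PageIndex documentation.

```python
import json
import re

FENCE = "`" * 3  # three backticks, built indirectly to keep this example readable


def parse_retrieval_reply(reply: str) -> list[dict]:
    """Extract a list of {"page", "content"} passages from a model reply (assumed format)."""
    # Models often wrap JSON in a fenced block; use the fenced body if one is present.
    match = re.search(FENCE + r"(?:json)?\s*(.*?)" + FENCE, reply, flags=re.DOTALL)
    payload = match.group(1) if match else reply
    items = json.loads(payload)
    if not isinstance(items, list):
        raise ValueError("expected a JSON list of passages")
    passages = []
    for item in items:
        # Keep only well-formed entries so callers can rely on both keys being present.
        if isinstance(item, dict) and "page" in item and "content" in item:
            passages.append({"page": int(item["page"]), "content": str(item["content"])})
    return passages


# Made-up reply for illustration only; not real API output.
sample_reply = FENCE + 'json\n[{"page": 3, "content": "Example passage."}]\n' + FENCE
print(parse_retrieval_reply(sample_reply))
```

Validating the shape before use keeps a malformed or partially formatted reply from propagating silently into later processing.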

Long Documents

Handle super-long documents without context limits

Reasoning-Based

Transparent retrieval based on document structure and reasoning